LIV and Mixed Reality

Ever wondered how to make your VR gameplay videos more appealing to your viewers? If so, you may have searched for examples of what is possible and found videos of people appearing inside the virtual world. The question is, how is that even possible? Well, there are applications that allow it, and today I'll talk about LIV.

LIV is an application for mixed reality capture: it lets you put yourself from the real world into the virtual world, and then you can either stream it or record it with software like OBS or XSplit. If you don't have the equipment for recording mixed reality, you can use an avatar that is placed in the game where you normally stand, and LIV can create virtual cameras that record the avatar in motion. LIV can also be used to enhance the first-person view (FPV), because the normal FPV has a limited field of view and can make viewers motion sick. The last feature, which I haven't used and so can't comment on, is a gamepad-controlled camera: while you are playing, a friend can control a camera inside the game.

All of these features can make the viewing experience much better and allow for a stronger connection between you and your audience. The application is free and available on Steam. Note that it only works with Steam titles.

I knew mixed reality videos were possible, but I assumed you really needed a green screen studio to record them. While a green screen studio certainly helps, you can get fairly good results in your own room, even without a green screen. You have three options: let AI key out the background for you, which will never be perfect but can be fairly good; use a Kinect, which can do the same with better or worse results depending on the conditions in your room; or use a green screen with any camera. Note that you need at least 2 meters of space to calibrate the camera easily. That much space is not strictly necessary, but it is highly recommended (and more is better).

The minimum requirements are an Intel i7 (or AMD equivalent) and a GTX 1070. Note that OBS needs a good processor and GPU as well, so on this setup you may have to lower your resolution or quality settings to get everything running. In practice, this means you shouldn't supersample, and your computer should be set to maximum performance (both CPU and GPU).

After downloading LIV, you may need to create an account if you want to record without the LIV watermark. Then you will need to install the LIV SteamVR driver: just click the corresponding button and it will install. Note that SteamVR (which you should already have installed) cannot be running during the installation. When the installation is done, you may need to restart LIV. Run SteamVR first, then launch LIV.

Then plug in and install your camera and set it aside for a while. Install your preferred streaming software if you don't have it already. I use OBS, so that is what I will talk about. I recommend creating a new scene in OBS and adding a Video Capture Device (using the camera you'll record with). Alternatively, you can use the output view in LIV, but OBS is more convenient. Once you see the camera feed in OBS, find the right spot to record from so that your whole body is in the frame. Note that you should have at least 1.6 to 2 m of space in front of the lens.
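Why so much room? A rough pinhole-camera estimate helps: the height a camera can see at distance d is 2 · d · tan(vfov / 2), so fitting your whole body into the frame sets a minimum distance. This is only a sketch; the function name, the 10% margin above the head and below the feet, and the field-of-view values are assumptions for illustration, so check your own camera's specifications for its actual vertical field of view.

```python
import math


def min_camera_distance(subject_height_m: float, vertical_fov_deg: float,
                        margin: float = 1.1) -> float:
    """Approximate distance (m) at which a standing subject fits the frame vertically.

    Pinhole model: visible height at distance d is 2 * d * tan(vfov / 2),
    so d = visible_height / (2 * tan(vfov / 2)).
    `margin` is an assumed 10% of headroom above the head and below the feet.
    """
    if not 0 < vertical_fov_deg < 180:
        raise ValueError("vertical FOV must be between 0 and 180 degrees")
    if subject_height_m <= 0 or margin <= 0:
        raise ValueError("height and margin must be positive")
    visible_height = subject_height_m * margin
    half_fov = math.radians(vertical_fov_deg) / 2
    return visible_height / (2 * math.tan(half_fov))


# Hypothetical example: a 1.8 m person and a camera with a 60 degree vertical FOV.
print(round(min_camera_distance(1.8, 60), 2))
```

A narrower lens pushes the required distance up quickly, which is one reason a webcam with a modest field of view can need more than the 1.6 to 2 m suggested above.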

When you have found the right spot, click 'Launch Compositor' in LIV. (Note that there shouldn't be any shiny or reflective objects in the camera's view. You might also need additional lighting to get rid of shadows and the like.)

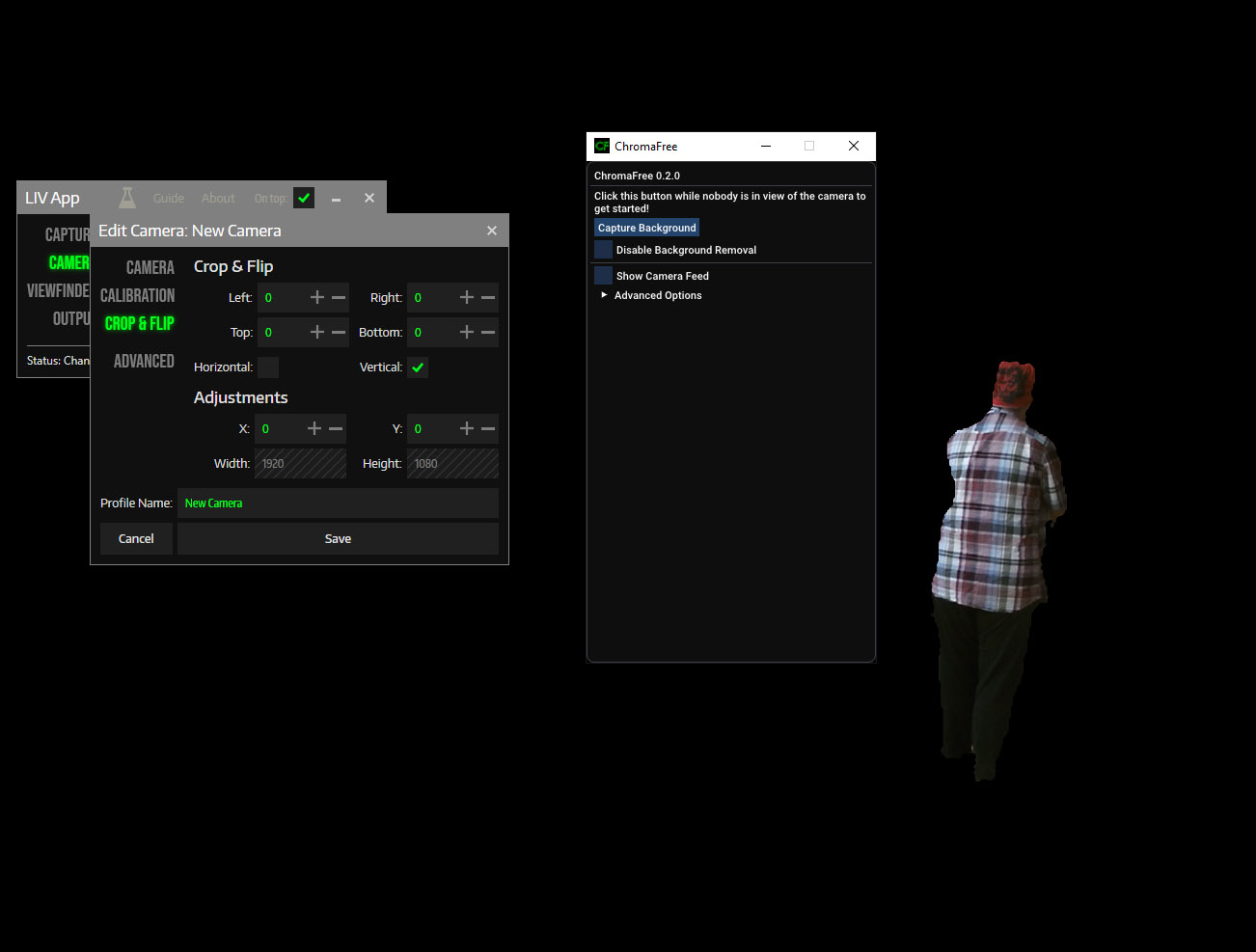

A new window will open with four options: Capture, Camera, Viewfinder and Output. Click Camera and then the first button, which shows a camera with a plus sign. Choose the device type: pick a physical camera, then select the specific model under Device. Pick a mode as well. You can name the camera profile and then save it. You can also use an iPhone with the additional LIV app; the iPhone has to be connected to the same network as the computer you will be using.

Kinect Camera/ChromaFree seems to have a problem with black hair, which is why I use a bandana to cover my hair.

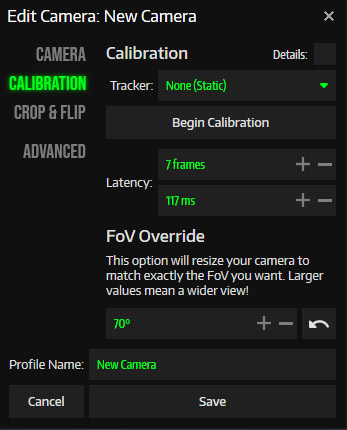

If you are using a Kinect, you might be affected by a bug that flips your output vertically or horizontally. To fix it, go to Calibration and then Crop & Flip, and check the flip you need. You may be able to uncheck it again later and still get the correct orientation, so do whatever is necessary: keep it checked or uncheck it. When the output looks as it should, head over to Calibration. This is where the real fun begins. Pick a Static tracker and click Calibration. A VIVR application window will appear; press Start Calibration to begin. The calibration can be done on the desktop or in the headset, and I find it easier in the headset. Grab your controllers and follow the instructions.

In front of you, you should see a big red cross, positioned where your camera lens is. Move right in front of it so that the trigger on your controller is exactly in the middle of the cross, and press it. A new, smaller cross will then appear. Step back from the camera until LIV shows the distance in green (you should reach the far end of your play area). Then align your trigger with the center of the red cross again (you should see it in front of you on the screen) and press it. Do the same for the last red cross. Be as accurate as you can.

After these three clicks, your virtual controllers should match the location of the real-world ones. If they don't, you will have to fine-tune the calibration manually. After the three clicks, come closer and stand in front of the camera lens. Hold one of your controllers in the middle of the frame and adjust the camera position using X/Y/Z. Z controls depth and is primarily used to match the size of the virtual controllers to the real ones.

Then step back to where you made the last two red-cross clicks and strike a T-pose (arms stretched out to the sides). Now use Pitch to move the virtual controllers up or down and Yaw to move them left or right. Get one controller as close to the real one as possible.

Then do the T-pose again and check how far the virtual controllers are from the real ones. Adjust the FOV to bring them where they should be.

After this, step close to the lens and do the same.

Then move to the middle of the play area and do the same. At this point you can use rotation to put the controllers exactly where they should be.

Repeat these steps until you are satisfied.

Now go back to Camera, where you should see Device Settings under Mode. Here you can choose whether to key out a certain color or use AI keying. Color keying has further settings you can tweak to get the best result. If you are using a Kinect, it will open the ChromaFree window. Step out of your prepared scene and click Capture Background. The background should disappear from the scene and be replaced with black, and you should appear when you walk back into the camera view.

If you are using a green screen and more of your room is in view, go to Crop & Flip and crop the output so that only the green screen area is visible (or rather transparent at this point, so that it shows just you).

After your calibration is done you can save it and go to Capture. Choose the game you want to record and click on Sync and Launch. Now the game should load and you should see yourself in the output window.

In OBS, create a new scene and add a Window Capture targeting the LIV output window. The LIV output is video only, so you will have to add the audio device the game uses as a separate source. Since the game should already be running, you should see the level meter moving on the correct device; if not, try each device one by one until you get sound. Then add anything else you want to use. If you want to capture the FPV, you can either capture the monitor view, which may be cropped, or use the OpenVR OBS plugin, which captures the whole mirror surface at full resolution.

In an ideal world, after doing all this you would be ready to record videos. You might still run into problems, though, whether from a wrong room setup or insufficient performance. If your play area ends up in the wrong place but you want to keep your current LIV settings, you can use OVR Advanced Settings to move or rotate the play space. If performance is not good enough to play, you may need to lower the resolution or the FOV. These two settings have the biggest impact on performance.

Your lighting might be off, and some cameras let you adjust settings such as exposure or sharpness. Some don't offer any of these settings, but LIV itself lets you create masks or use different kinds of lighting that can help your scene.

While calibrating, you might also have noticed that audio and video don't quite match. You may need to set a delay for the VR capture. The VR video latency is set in LIV under Calibration: move your arm and adjust the value until the virtual controller keeps pace with your real one. For audio, you can calculate the offset once you have figured out the video delay. The formula is audioLatencyAdjustment = (1000 / outputFPS) × frames, where frames is the delay in frames. The result is in milliseconds and should be added to your audio track in OBS, which you can do via Advanced Audio Properties (the Sync Offset field). If you are recording videos, don't worry if you forget about it, since you can fix it later with appropriate software (I use VirtualDub for this).
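The formula is simple arithmetic: one frame lasts 1000 / outputFPS milliseconds, and you multiply that by the number of frames of delay. Here is a minimal Python sketch of it; the function name is hypothetical, and it only does the calculation, it does not read anything from LIV or OBS.

```python
def audio_latency_adjustment_ms(output_fps: float, frames: float) -> float:
    """Audio offset in ms for a video delay of `frames` frames at `output_fps`.

    Implements audioLatencyAdjustment = (1000 / outputFPS) x frames.
    Multiplying before dividing keeps whole-number results exact in floating point.
    """
    if output_fps <= 0:
        raise ValueError("output_fps must be positive")
    return 1000.0 * frames / output_fps


# Hypothetical example: a 3-frame delay at 60 FPS output.
print(audio_latency_adjustment_ms(60, 3))  # 50.0
```

At 60 FPS each frame is about 16.7 ms, so a 3-frame delay becomes a 50 ms offset on the audio track in OBS.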

OBS Settings

These settings should be fine when using a GTX 1070.

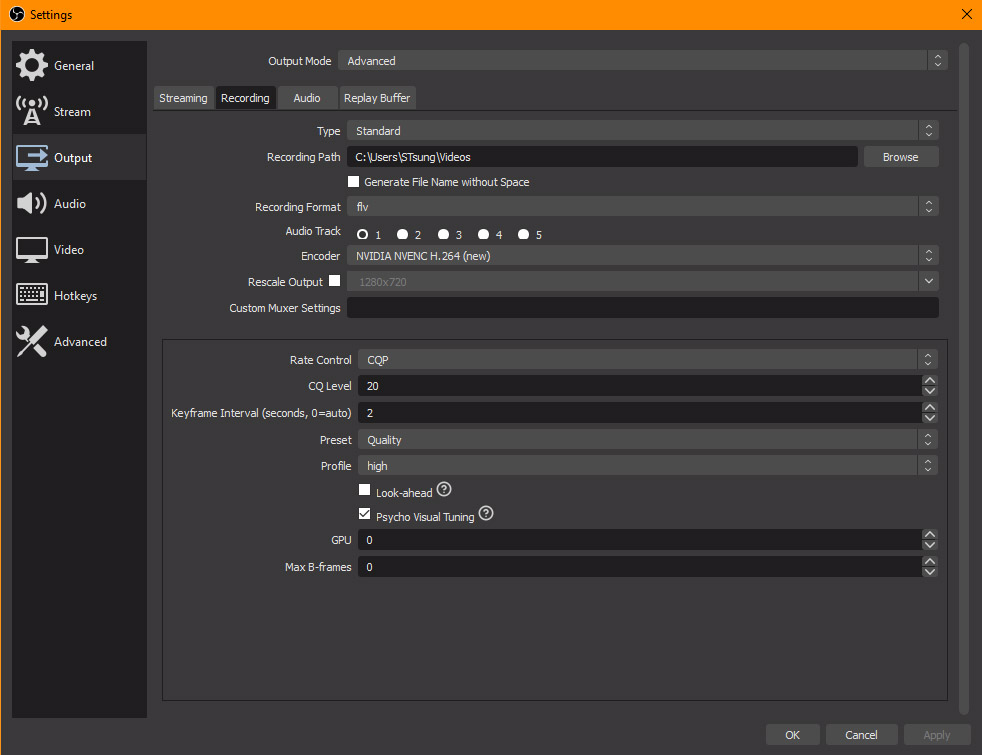

I haven't covered the Output settings so far because you will have to work them out for yourself; they depend on your system configuration. Below I'll go over my OBS settings a bit so you have an idea of what can work with a GTX 1070 or a better graphics card.

My upload speed is 10 Mbps, which means I can normally stream 1080p60 at a 6000 Kbps bitrate. My Ryzen 7 and Titan X are very capable of that, but with high motion and two or three very demanding GPU-based inputs it may not work out, and it actually didn't for me. So I had to lower the resolution to 1280x720 (I set it in both LIV and OBS so that nothing would get rescaled by accident).
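The numbers in that paragraph can be sanity-checked with a little arithmetic. The sketch below checks whether a stream fits within an upload link and compares how many pixels per second the encoder has to handle at 1080p60 versus 720p60. The function names, the 160 Kbps audio bitrate and the 75% headroom factor are assumptions for illustration; the headroom is a common rule of thumb, not an OBS or Twitch requirement.

```python
def fits_upload(video_kbps: int, audio_kbps: int, upload_mbps: float,
                headroom: float = 0.75) -> bool:
    """True if video + audio bitrate stays within `headroom` of the upload speed.

    Uses decimal units (1 Mbps = 1000 Kbps). The 75% headroom is an assumed
    rule of thumb that leaves room for network fluctuations.
    """
    return video_kbps + audio_kbps <= upload_mbps * 1000 * headroom


def pixel_rate(width: int, height: int, fps: int) -> int:
    """Pixels per second that have to be rendered/encoded at this output."""
    return width * height * fps


# 6000 Kbps video + assumed 160 Kbps audio on a 10 Mbps upload.
print(fits_upload(6000, 160, 10))  # True (6160 <= 7500)

# Dropping from 1080p60 to 720p60 cuts the pixel rate by a factor of 2.25.
print(pixel_rate(1920, 1080, 60) / pixel_rate(1280, 720, 60))  # 2.25
```

Bandwidth was not the bottleneck here; the GPU load was. Going down to 1280x720 means handling 2.25 times fewer pixels per frame, which is why it helped.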

If you have a newer card and are running a version of OBS older than 23.0, update it. OBS 23.0 introduced the new NVENC encoder, which uses the GPU more efficiently (though it may not perform as well on a GTX 1070). For streaming, I use the following when not using an avatar (when using an avatar, I switch to NVENC): output of 1280x720, 60 FPS, x264 encoder, CBR at a 6000 Kbps bitrate (Twitch limits it to 3500 for me), keyframe interval 0, CPU usage preset Very Fast, profile High. For recording, I use the new NVENC with CQP 16, keyframe interval 2, preset Max Quality (2-pass encoding), profile High, Psycho Visual Tuning on, Look-ahead off, GPU 0, Max B-frames 0.

If you have less dedicated video memory, you can use 1-pass encoding (the Quality preset) and lower the quality to somewhere between CQP 20 and 23. If your graphics card is not powerful enough, you'll have to cut resolution and FPS, but if it comes down to that, your computer is most likely not VR-ready anyway, so you probably won't run into this problem.
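The two paragraphs above boil down to a small decision table: which encoder to stream with depending on whether you use an avatar, and which recording preset to use depending on how much video memory you have. Below it is sketched as a Python data structure. The class and function names are hypothetical, the encoder and preset strings are descriptive labels rather than OBS's internal identifiers, and the code does not talk to OBS in any way. The NVENC streaming preset is left as None because it isn't specified above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EncoderProfile:
    encoder: str            # "x264" or "nvenc" (labels only, not OBS internal IDs)
    rate_control: str       # "CBR" or "CQP"
    rate_value: int         # Kbps for CBR, quality level for CQP
    keyframe_interval: int  # seconds; 0 means "auto" in OBS
    preset: Optional[str]   # None where the settings above don't name one
    profile: str = "high"


def streaming_profile(using_avatar: bool) -> EncoderProfile:
    """Streaming at 1280x720/60: x264 normally, NVENC when using an avatar."""
    if using_avatar:
        return EncoderProfile("nvenc", "CBR", 6000, 0, None)
    return EncoderProfile("x264", "CBR", 6000, 0, "veryfast")


def recording_profile(low_vram: bool, cqp: int = 20) -> EncoderProfile:
    """Recording with new NVENC: 2-pass Max Quality at CQP 16, or 1-pass Quality at CQP 20-23.

    Psycho Visual Tuning on, Look-ahead off, GPU 0 and Max B-frames 0 are not
    modeled in this sketch.
    """
    if low_vram:
        if not 20 <= cqp <= 23:
            raise ValueError("use a CQP between 20 and 23 for 1-pass encoding")
        return EncoderProfile("nvenc", "CQP", cqp, 2, "quality (1-pass)")
    return EncoderProfile("nvenc", "CQP", 16, 2, "max quality (2-pass)")


print(streaming_profile(using_avatar=False))
print(recording_profile(low_vram=True, cqp=22))
```

Making the VRAM choice a simple flag rather than a memory threshold is deliberate. "Lower" dedicated video memory is relative, so decide based on whether 2-pass encoding actually runs smoothly on your card.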

Getting your OBS settings right is not the only thing that affects performance. There are a few more things you can do to help OBS. First, turn on Game Mode in Windows if you haven't already. Next, check whether Game DVR is running; you can find it under Gaming settings. To turn Game DVR off, turn off the Game Bar, then go to Captures and turn off Background Recording. If you can't find these settings, you can disable Game DVR with the Local Group Policy Editor or in RegEdit.

Set OBS to run as administrator: find the OBS executable, right-click it, go to Properties > Compatibility, and check "Run this program as an administrator".

Hopefully this post will help you set up your stream.

Thank you for reading

S'Tsung (stsungjp @ Twitter)